December 12, 2019; CityLab

On what principles do we want city governments to operate? What should municipal offices prioritize when designing systems? These are questions governments perhaps ought to ask themselves more often than they do; in the case of New York City’s Automated Decisions Systems Task Force, asking them early could have saved a lot of headaches.

The task force went through fits and starts. At least one member left; multiple outside interest groups attempted to help and were ignored. Ultimately, they produced a toothless report that spends the majority of its pages describing background issues rather than offering solutions.

The background is this: New York, like many municipalities, relies on algorithms and other automated decision systems (ADS) to simplify the work of governing. Algorithms in New York inform a growing range of city decisions: whether someone is suitable for parole, for example, or whether a bodega is eligible to function as a SNAP vendor, or even how a school bus route is drawn.

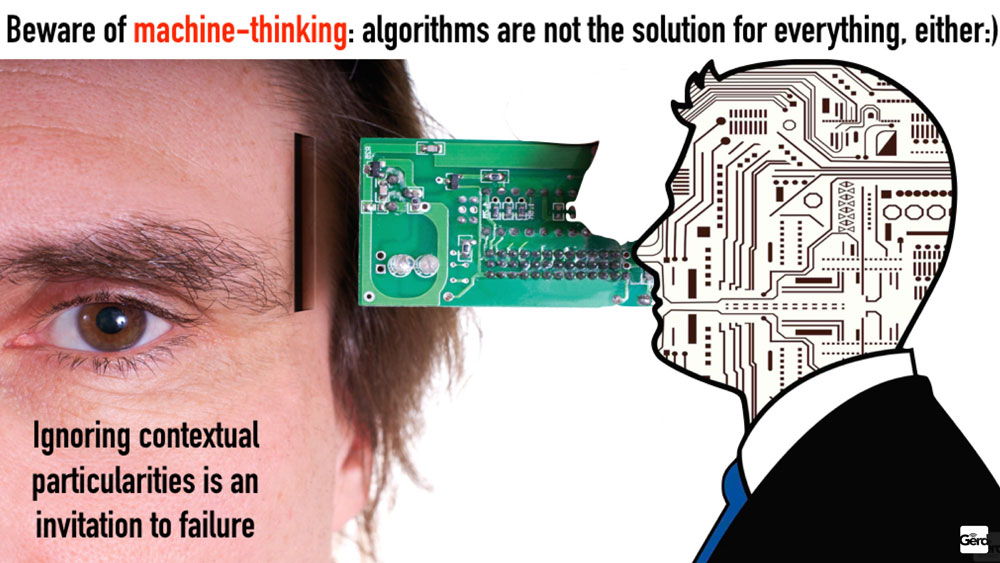

But as NPQ and many others have pointed out, algorithms don’t correct for bias; they encode it. (After all, they learn by studying prior decisions.) Because they’re perceived as purely rational, computer systems aren’t questioned or subject to oversight in the same way a bureaucrat would be, and thus disparities and biases are embedded in decisions in ways that make accountability difficult. While it is possible to adjust the algorithms to at least mitigate these problems, all too often algorithms applied to public policy can become, as one mathematician put it, “weapons of math destruction.”

New York was supposed to be the pilot project for unearthing and examining the ways in which ADS and resulting government practices affected citizens’ lives. Local Law 49 of 2018 established the ADS Task Force, which should have informed policymakers about how and where biases were being systematized, and if and when ADS use was appropriate.

Instead, the task force got off on the wrong foot when its members could not reach consensus about what constituted ADS in the first place. Former task force member Albert Fox Cahn wrote that members debated, “What exactly is an automated decision system? Is it any computer-based automation? What about policy-driven automation, when individuals’ discretion is automated by policies and procedures that are memorialized on paper—like the NYPD Patrol Guide?”

Those are all great questions, and deserving of scrutiny, but city officials wanted the task force’s inquiries confined specifically to artificial intelligence and algorithms—even though, as Cahn points out, “You don’t need a multimillion-dollar natural-language model to make a dangerous system that makes decisions without human oversight, and that has the power to change people’s lives. And automated decision systems do that quite a bit in New York City.”

The task force was established within the mayor’s office, which might have been a red flag from the start—an organization “investigating” itself is bound to tie itself in knots. Several outside advocacy organizations, or coalitions of organizations, attempted to inform and empower the process. A coalition of civil rights advocates, researchers, community organizers, and concerned residents submitted a letter recommending members of the task force, and several of those people were indeed appointed, giving the coalition hope that their expertise would be welcomed.

According to a shadow report they later published, a great deal of effort went into preparing help for the task force: The AI Now Institute published a practical framework for AI system accountability, with an accompanying article that specifically offered the framework to the NYC task force. Rashida Richardson offered a list of issues, areas of expertise, organizations, and transparency considerations to be consulted. The coalition drafted recommendations based on Local Law 49. It appeared the task force did not want their help.

The nail in the efficacy coffin really came when city departments refused to release information about the systems currently in use. It’s normal for vendor technologies to be kept secret, to protect vendors’ products and to keep residents’ information private. But without any information about what systems were being used, how could the task force assess their impact? The task force’s existence was predicated on the assumption that automating current systems and procedures was producing inequitable outcomes. If city agencies weren’t willing to prioritize what they could learn from interrogating these systems over business as usual, the task force was doomed from the start.

The report is predictably disappointing. Two-thirds of the way in, it begins to list recommendations like centralizing an ADS structure within City government or adopting principles of fairness. It begins from the assumption that ADS is a good thing that should be used, and that the city is itself capable of any necessary oversight. That isn’t where a task force examining the impact of ADS should have started.

The shadow report described the coalition of outside advocates as “a crucial element in leveraging the public’s concerns,” and repeatedly expressed concern about the lack of public engagement. It was endorsed by dozens of organizations and individuals, including Data for Black Lives, the Algorithmic Justice League, the American Civil Liberties Union (ACLU), the Immigrant Defense Project, and faculty from half a dozen universities.

Algorithms are designed to maximize efficiency, but that isn’t the highest good of a public system. Richardson, editor of the shadow report and director of policy research at the AI Now Institute at New York University (NYU), said that the city should use its enormous purchasing power as leverage to demand transparency from vendors about the systems the city pays to use. That would be a good start.—Erin Rubin

Correction: This newswire has been altered from its initial form to correct certain errors of fact. The author of the letter to the NYC ADS task force is Rashida Richardson, and the shadow report indicates that community members tried to engage with the task force in other ways.