June 22, 2016, Orlando Business Journal

Elon Musk, a Silicon Valley billionaire known for Tesla Motors, SpaceX, and much else (previously covered in NPQ from a nonprofit perspective), joined other preeminent tech entrepreneurs in creating OpenAI, with an initial $1 billion investment. OpenAI has just announced that it is creating an “off-the-shelf” general-purpose robot that would perform basic housework.

OpenAI’s intentional embrace of the nonprofit structure is explained in its first blog post:

“OpenAI is a non-profit artificial intelligence research company. Our goal is to advance digital intelligence in the way that is most likely to benefit humanity as a whole, unconstrained by a need to generate financial return. Since our research is free from financial obligations, we can better focus on a positive human impact. We believe AI should be an extension of individual human wills and, in the spirit of liberty, as broadly and evenly distributed as possible.”

Musk is worried about the potential of artificial intelligence (AI) to become an existential threat, so OpenAI’s mission is to promote and develop open-source, friendly AI in ways that will benefit society as a whole. Everything OpenAI learns will be made freely available so that the world’s innovators can serve as a counterbalance to the huge investments in AI being made by a few behemoths, namely Google, Microsoft, and Facebook. While those companies often share their work in research papers, commercial interests guide their advancements. OpenAI includes this statement in the same blog post:

“Because of AI’s surprising history, it’s hard to predict when human-level AI might come within reach. When it does, it’ll be important to have a leading research institution which can prioritize a good outcome for all over its own self-interest. We’re hoping to grow OpenAI into such an institution. As a non-profit, our aim is to build value for everyone rather than shareholders.”

AI has a way of advancing exponentially. Housework and self-driving cars are merely the beginning. Once the OpenAI team creates the robotic maid, their next challenge will be to enable robots to hold a conversation and problem-solve.

Machine learning, a branch of AI, gives computers the ability to learn without being explicitly programmed. Programs adjust their own behavior when exposed to new data. The process is similar to data mining, but instead of extracting data for human comprehension, machine learning detects patterns in the data and adjusts the program’s actions accordingly. Supervised algorithms apply what they have learned from labeled examples to new data. Unsupervised algorithms draw inferences from datasets that carry no labels.
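For readers who want to see the difference between the two approaches, here is a minimal Python sketch. It is an illustration only, not anything OpenAI has published: the function names, the "clean"/"dirty" labels, and the sensor readings are all hypothetical, and the unsupervised half is a simple one-dimensional two-cluster k-means chosen for brevity.

```python
# Hypothetical example: a housework robot reads a "dirt level" from a floor sensor.
# All names and numbers below are invented for illustration.

def train_centroids(examples):
    """Supervised step: average the readings seen for each human-provided label."""
    sums, counts = {}, {}
    for value, label in examples:
        sums[label] = sums.get(label, 0.0) + value
        counts[label] = counts.get(label, 0) + 1
    return {label: sums[label] / counts[label] for label in sums}

def predict(centroids, value):
    """Apply what was learned: pick the label whose average is closest to the new reading."""
    return min(centroids, key=lambda label: abs(centroids[label] - value))

def two_means(values, iterations=10):
    """Unsupervised step: split unlabeled readings into two groups (1-D k-means, k=2)."""
    lo, hi = min(values), max(values)
    for _ in range(iterations):
        near_lo = [v for v in values if abs(v - lo) <= abs(v - hi)]
        near_hi = [v for v in values if abs(v - lo) > abs(v - hi)]
        if not near_hi:  # all readings identical; nothing to separate
            break
        lo = sum(near_lo) / len(near_lo)
        hi = sum(near_hi) / len(near_hi)
    return lo, hi

# Supervised: a person has labeled four past readings.
labeled = [(1.0, "clean"), (2.0, "clean"), (8.0, "dirty"), (9.0, "dirty")]
centroids = train_centroids(labeled)
print(predict(centroids, 7.0))

# Unsupervised: no labels at all; the program finds two natural groups on its own.
unlabeled = [1.0, 2.0, 1.5, 8.0, 9.0, 8.5]
print(two_means(unlabeled))
```

In the supervised half, a person supplies the answers up front and the program generalizes from them. In the unsupervised half, the program is handed only raw readings and must discover the grouping itself; deciding what those groups mean is still left to a human.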

In other words, in time, robots will get personal and be the smartest ones in the room.

In 2014, Elon Musk warned that advances in artificial intelligence could lead to a Skynet-like takeover within the next 5 to 10 years. Speaking that year at the MIT Aeronautics and Astronautics Department’s Centennial Symposium, he put it this way: “With artificial intelligence, we are summoning the demon.”

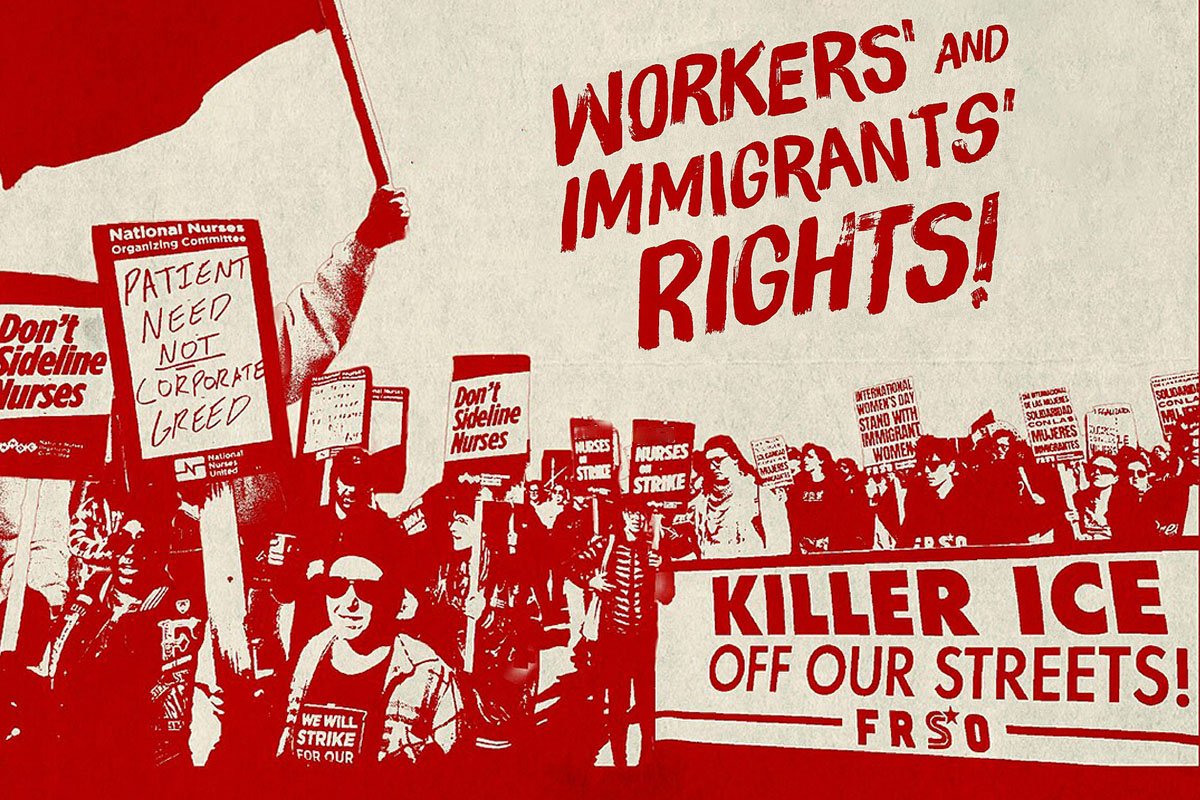

Musk’s warnings should be heeded. OpenAI is his less dramatic way of confronting this threat. By making everything “open,” humanity has a greater opportunity to control the growth and direction of AI. But NPQ readers might want to ask if anyone other than tech leaders should be making these decisions. Silicon Valley is at the center of innovation, but ethics and many other concerns are forced to the forefront with the advent of AI. Should there be government or United Nations oversight? Should social justice leaders have a voice? Will robots be programmed with the biases and goals that unconsciously reflect the worldview of tech billionaires?

To Musk’s credit, he was also an early benefactor and a founder of the Future of Life Institute based in Boston, which works to mitigate the existential risks of AI. The UK’s Responsible Robotics is another voluntary bulwark against unbridled exploitation of this technology. But are these nonprofit initiatives enough to control the potential harm of AI?—James Schaffer